Introduction

GPT, or Generative Pre-trained Transformer, is a Large Language Model (LLM). Among other things, it can interact with users and generate human-like text. An LLM is a type of foundation model, so the obvious question is: what are foundation models? Foundation models (FMs) are pre-trained on large amounts of unlabeled data using self-supervised learning, meaning the training signal comes from the data itself rather than from human-written labels. The “Large” in LLM refers both to the size of the model and to the volume of training data, which can run to tens of thousands of gigabytes.

These models learn patterns in the data in a way that produces a general-purpose output, which can then be adapted to specific tasks. LLMs are a subset of FMs that specialize in text and text-like data. They are trained on large text datasets such as conversations, articles and books. A parameter is a value the model can change independently as it learns, and the more parameters a model has, the more complex it can be. Currently, LLMs and related foundation models are employed in scenarios ranging from voice recognition and text-to-image conversion to email filtering and web search.
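
To make the idea of a parameter concrete, here is a minimal sketch that counts the learnable values in a stack of fully connected layers. The function names and layer widths are illustrative assumptions, not taken from any particular model; real LLMs also have attention and embedding parameters that this sketch ignores.

```python
def dense_layer_params(n_inputs: int, n_outputs: int) -> int:
    """One weight per input-output pair, plus one bias per output."""
    return n_inputs * n_outputs + n_outputs


def mlp_params(layer_sizes: list[int]) -> int:
    """Total parameters in a stack of dense layers with the given widths."""
    return sum(
        dense_layer_params(n_in, n_out)
        for n_in, n_out in zip(layer_sizes, layer_sizes[1:])
    )


# Hypothetical layer widths, chosen only to show how the count grows.
small = mlp_params([512, 2048, 512])
large = mlp_params([4096, 16384, 4096])
print(f"small block: {small:,} parameters")
print(f"large block: {large:,} parameters")
```

Making every layer eight times wider multiplies the parameter count by roughly sixty-four, which is one reason model sizes climb so quickly.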

Architecture

In machine learning, a model’s arrangement of neurons and layers defines its base architecture. Different architectures capture different relationships in the data and emphasize specific components during training. Consequently, the architecture shapes the tasks the model excels at and the quality of the output it produces.

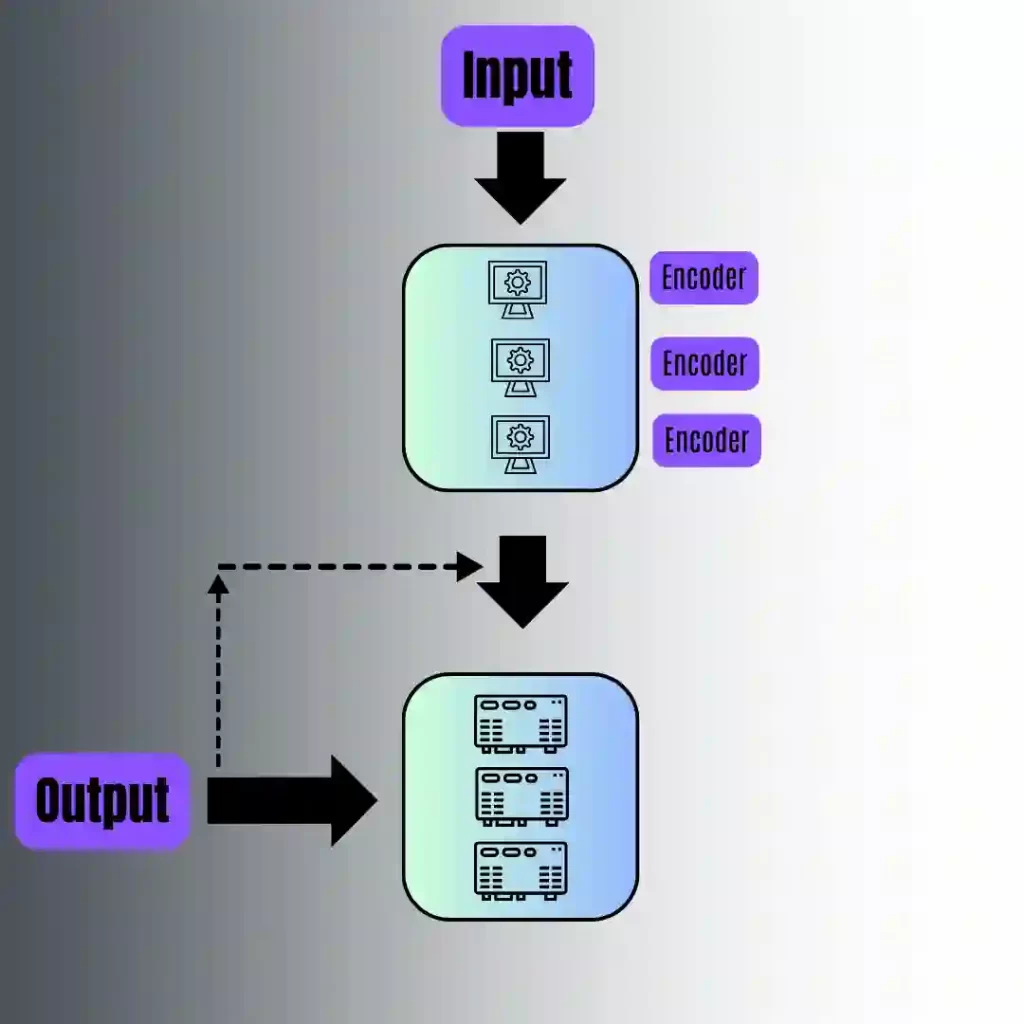

Encoder-Decoder

This architecture has two components. The encoder takes the input data and transforms it into an abstract continuous representation that captures the essence of the original input. The decoder transforms that continuous representation into understandable output, one step at a time, while also incorporating its own previous outputs. The encoding and decoding process allows the model to handle complex language tasks efficiently, leading to a more coherent response. This dual-process architecture excels in generative tasks like machine translation and text summarization, where understanding the full input is crucial for output generation. However, it may slow down during inference due to the need to process the complete input upfront.

Encoder Only

Popular models such as BERT and RoBERTa utilize encoder-only architectures. These models transform input into rich, contextualized representations without directly generating new sequences. BERT, for instance, is pre-trained on extensive text corpora using two innovative approaches: masked language modeling (MLM) and next-sentence prediction. MLM works by hiding random tokens in a sentence and training the model to predict these tokens from their context. This way, the model understands the relationship between words in both left and right contexts. This “bidirectional” understanding is crucial for tasks requiring strong language understanding, such as sentence classification (e.g., sentiment analysis) or filling in missing words.
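
The masking step described above can be sketched in a few lines. This is a simplified illustration, not BERT's actual preprocessing: the `mask_tokens` helper is hypothetical, it splits on whitespace instead of using a subword tokenizer, and it always substitutes `[MASK]`, whereas BERT sometimes substitutes a random token or leaves the original in place.

```python
import random

MASK = "[MASK]"


def mask_tokens(tokens, mask_prob=0.15, seed=0):
    """Hide random tokens; return the masked input and the prediction targets.

    labels[i] holds the original token where a mask was applied and None
    elsewhere, so a training loss would only be computed on masked positions.
    """
    rng = random.Random(seed)  # fixed seed keeps the sketch reproducible
    masked, labels = [], []
    for token in tokens:
        if rng.random() < mask_prob:
            masked.append(MASK)
            labels.append(token)
        else:
            masked.append(token)
            labels.append(None)
    return masked, labels


sentence = "the quick brown fox jumps over the lazy dog".split()
masked, labels = mask_tokens(sentence, mask_prob=0.3)
print(masked)
print(labels)
```

Because the model sees the unmasked words on both sides of each `[MASK]`, predicting the hidden token forces it to use left and right context together.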

Decoder Only

Decoder-only architectures generate the continuation of an input sequence, relying on the preceding context. Unlike encoder-based models, each position sees only the tokens before it, so these models do not build a bidirectional view of the input, but they excel at predicting the next likely word. Consequently, decoder-only models tend to be more “creative” and “open-ended” in their output. Activities such as creative writing and story completion suit this token-by-token generation.
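
Token-by-token generation can be illustrated with a toy stand-in for the model. The sketch below assumes a bigram count table in place of a neural network and always picks the most frequent follower (greedy decoding). A real decoder-only model conditions on the whole preceding context, not just the last token; the corpus and function names here are made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(corpus):
    """Count which token follows which across the training sentences."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        tokens = sentence.split()
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts


def generate(counts, prompt, max_new_tokens=5):
    """Extend the prompt one token at a time, feeding each output back in."""
    tokens = prompt.split()
    for _ in range(max_new_tokens):
        followers = counts.get(tokens[-1])
        if not followers:  # nothing ever followed this token: stop early
            break
        next_token, _count = followers.most_common(1)[0]
        tokens.append(next_token)
    return " ".join(tokens)


corpus = [
    "the cat sat on the mat",
    "the cat sat on the sofa",
    "the cat slept",
]
counts = train_bigrams(corpus)
print(generate(counts, "the", max_new_tokens=4))  # -> the cat sat on the
```

Each generated token becomes part of the context for the next step, which is the same loop an LLM runs, only with a far richer predictor.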

Mixture of Experts (MoE)

MoE, used by models like Mixtral 8x7B, departs from the traditional dense Transformer and builds on the insight that a single, bulky language model can be split into smaller, specialized sub-models, called experts. Picture a gating network acting as the ringmaster, routing each input token to a few of these sub-models. Each sub-model then homes in on different facets of the input data, making the whole system a well-oiled, specialized machine. This lets MoE handle complex tasks with differing needs. The whole point of the architecture is to increase the number of LLM parameters without a matching increase in computation, because only the selected experts run for each token.
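
The routing idea can be sketched with plain Python. This is a minimal illustration under stated assumptions: the experts are trivial scalar functions, and the gate scores are passed in by hand, whereas in a real MoE layer the experts are feed-forward networks and the scores come from a learned gating layer. The `route_token` name is hypothetical.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(gate_scores, experts, token, top_k=2):
    """Run only the top_k highest-scoring experts and blend their outputs."""
    ranked = sorted(range(len(experts)), key=lambda i: gate_scores[i], reverse=True)
    chosen = ranked[:top_k]
    weights = softmax([gate_scores[i] for i in chosen])
    output = sum(w * experts[i](token) for w, i in zip(weights, chosen))
    return output, chosen


# Four toy "experts"; only two of them are evaluated for this token.
experts = [lambda x: x + 1, lambda x: 2 * x, lambda x: x * x, lambda x: -x]
gate_scores = [0.1, 2.0, 1.0, -1.0]  # assumed values, normally produced by the gate
output, chosen = route_token(gate_scores, experts, token=3.0)
print(f"experts used: {chosen}, blended output: {output:.4f}")
```

All four experts contribute parameters to the model, but each token pays the compute cost of only `top_k` of them, which is the trade-off the section describes.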

LLM Examples

- GPT-3: GPT stands for Generative Pre-trained Transformer, and this is the third version of the model, hence the number 3. It was developed by OpenAI. You might know its lineage through ChatGPT, which launched on a fine-tuned model from the follow-up GPT-3.5 series.

- BERT: BERT stands for Bidirectional Encoder Representations from Transformers. Google developed this large language model, which is widely used for various natural language tasks. It can also generate embeddings for specific text to help train other models.

- RoBERTa: RoBERTa stands for Robustly Optimized BERT Pretraining Approach. In the quest to enhance the performance of transformer architecture, Facebook AI Research developed RoBERTa as an improved version of the BERT model.

LLM Use Cases

- Code Generation: One of the most striking applications of LLMs is their ability to generate code for a specific task from the user’s natural-language description.

- Debugging and Code Documentation: If you find yourself in a coding conundrum, ChatGPT can come to your rescue by pointing out the lines likely causing the issue and suggesting fixes. It can also draft project documentation, saving you hours of work.

- Question Answering: Just like back in the day with AI-powered personal assistants, feel free to throw all sorts of wacky and genuine questions at ChatGPT.

- Language Translation: Supporting over 50 languages, ChatGPT can translate text and help you polish your content by correcting grammatical mistakes.

Conclusion

In conclusion, Large Language Models (LLMs) have reshaped natural language processing with their capacity for generating diverse and contextually rich text. Their versatility in both language understanding and generation shows their power in handling complex linguistic challenges. However, ongoing research is crucial to enhance their capabilities further and to address potential biases so that language processing stays ethical and fair.